| Issue | J. Eur. Opt. Society-Rapid Publ., Volume 22, Number 1, 2026 (Recent Advances on Optics and Photonics 2026) |
|---|---|
| Article Number | 22 |
| Number of page(s) | 23 |
| DOI | https://doi.org/10.1051/jeos/2026009 |
| Published online | 09 April 2026 |

Review Article

The utilization of novel immersive glasses-free stereoscopic display technologies in the modern exhibition industry

1 School of Cultural Industries and Tourism, Xiamen University of Technology, Xiamen 361024, Fujian, PR China

2 School of Optoelectronic and Communication Engineering, Xiamen University of Technology, Xiamen 361024, Fujian, PR China


Received: 8 December 2025

Accepted: 3 February 2026

Abstract

Immersive glasses-free display technologies provide a stereoscopic visual experience for multiple users without requiring specialized glasses. By accurately customizing light fields, stereoscopic displays can replicate realistic three-dimensional scenes with motion parallax and depth cues. The combination of stereoscopic display technologies, computing techniques, exhibition design, and information and communications technologies (ICTs) is widely used in conference and exhibition centers, museums, libraries, art galleries, archive centers, and so forth. These innovative models of the modern exhibition industry reshape organizational structures and improve working methods and business processes for upstream and downstream enterprises. Immersive glasses-free stereoscopic display technologies comprise holographic 3D display, optical illusion display, projection stereoscopic display, floating 3D display, and light field 3D display. This paper analyzes the technical background, technical principles, and typical application scenarios of these technologies. It then compares the advantages and disadvantages of each technology in key performance indicators, including resolution, color reproduction, and field of view. Future trends, limitations, and potential directions for improvement in practical applications are also discussed. This study explores the application of immersive glasses-free stereoscopic display technologies in meetings, incentives, conventions, and exhibitions (MICE) to improve audiences’ immersive experience, providing practical insights into such technologies as a critical basis for sustainable exhibition service.

Key words: Stereoscopic display / Immersive technology / Exhibition industry / Smart MICE / Glasses-free / 3D display

© The Author(s), published by EDP Sciences, 2026

This is an Open Access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


1 Introduction

With the rapid development of information and communications technologies (ICTs), the creation, production, and dissemination of culture and art are constantly changing, leading to an increasing demand for dynamic audio-visual effects in the modern exhibition industry. As an emerging key production factor, data has altered the structural elements, value creation, and operational logic of the traditional cultural, tourism, and exhibition industries [1]. Immersive experience has gradually become a new and progressive business form through the integration of exhibition, art, and technology. The modern exhibition industry has broken away from traditional ways of display and demonstration and has entered a new stage of panoramic, immersive, and overwhelming presentation [2]. How to use technologies to create immersive and interactive experiences, provide high-quality exhibition content and imaging effects, and improve service quality in the exhibition industry has become a critical research focus for scholars and practitioners.

Information transmission in traditional exhibitions primarily relies on textual and audio-visual content displayed on screens, which fails to meet the expectations and needs of modern visitors. With the application of digital technologies, the exhibition model has undergone a paradigm shift from static viewing to multi-modal interactive experiences. This new paradigm emphasizes visitor participation and multi-sensory stimulation, encompassing sight, touch, hearing, smell, and sometimes taste [3]. Compared to traditional audio-visual technologies, modern exhibition and display technologies offer a more comprehensive visual experience by combining ICTs, optical technology, computer science, landscape design, and artistic display [4, 5]. The novel display technologies enable visitors to actively participate in the narrative construction of exhibitions through interactive behaviors. Interaction has been identified as a critical factor in enhancing the immersive experience in terms of education, entertainment, socialisation, and escape [6]. 3D spatial stereoscopic display technologies are characterized by interactivity, immersion, and real-time performance, and have become one of the core technologies among the many high-tech display solutions in the field of exhibitions [7–9]. Moreover, advances in semiconductor light sources, computer operating speed, storage media, and optical systems are expected to gradually reduce the cost of 3D laser holographic technology. Stereo-related technologies have been widely used in antique shows, stage performances, and art and commercial exhibitions, as well as in venues such as conference centers, exhibition centers, museums, fairs, libraries, and archives [10].

2 Relevant technologies overview

2.1 Immersive technology

Immersive technology refers to the optical and digital systems allowing users to experience and interact with three-dimensional (3D) environments or virtual objects through multisensory engagement. These technologies enhance the sense of presence by integrating stereoscopic displays, spatial computing, and multisensory feedback [11]. The incorporation of stereoscopic display techniques – such as holographic displays, optical illusion displays, projection displays, floating 3D displays, light field 3D displays, and augmented reality (AR) – is crucial in creating realistic and dynamic environments for interactive exploration.

Immersive technology has four key characteristics. The first is virtual or true stereoscopic depth perception. By employing techniques such as holographic display, binocular disparity, projection display, floating 3D display, or light field 3D display, immersive technologies enable users to perceive objects within a stereoscopic space, thereby generating a virtual or true experience of depth. The second is multisensory feedback. These systems are built upon an integration framework that combines visual, auditory, haptic, and occasionally olfactory cues to simulate lifelike experiences. The third is real-time interaction. Immersive technologies provide interactive capabilities that enable users to navigate freely while manipulating and interacting with virtual objects in real time. The last is spatial computing. This aspect involves the integration of stereoscopic environments with motion-tracking sensors, which enables systems to deliver a responsive and dynamic virtual world [11, 12].

2.2 Interactive technology

Interactive technology refers to the digital systems and devices that let users engage with and manipulate digital content or environments through multi-modal input interfaces, including touch, voice, gesture, or even neural signals [13, 14]. Unlike traditional one-way communication systems, interactive technology establishes a two-way interaction channel, enabling the system to respond to user inputs, provide instantaneous feedback, and recalibrate its behavior based on received directives.

Interactive technology includes the following four key features [13, 14]. The first is user input. This feature allows users to provide commands or inputs through various interfaces, such as touchscreens, motion sensors, voice commands, or gesture recognition. The second one is real-time feedback. This indicates that the system responds instantly to user input, such as providing immediate feedback, modifying environmental states, or adapting interface elements. The third one is immersive experience. In certain systems, interactive technology is employed to construct immersive environments through the application of stereoscopic optical devices, virtual reality (VR) or augmented reality (AR), thereby enhancing user involvement. The last one is dynamic adaptability. This technology is capable of dynamically adjusting and optimizing exhibition content in response to user behaviors, developing a more personalized and adaptive experience [15].

2.3 3D Display technology

Two-dimensional (2D) displays are the most widely used display technology nowadays. The images are represented in two dimensions, only providing the height and width, but lacking the deeper elements that endow objects with three-dimensionality in the real world [16].

3D display technologies refer to a collection of optical, computing and digital techniques used to capture, process, and present three-dimensional information. Compared with traditional 2D display technologies, 3D display technologies provide critical support for more realistic visualization and in-depth analysis of objects in the real physical context by retaining the three-dimensional attributes (i.e., depth, volume and relative position). These technologies reconstruct three-dimensional scenes by arranging light-emitting pixels in a spatial array. In contrast to virtual stereoscopic display technologies, 3D display technologies exist in physical space, accurately reflecting the dimensions and spatial relationships of objects [17].

3D display technologies are mainly implemented based on the following key principles. The first principle is depth perception. It can capture and reconstruct depth information through optical or computational methods. The second one is multi-viewpoint display. It acquires image data from multiple angles to simulate a real 3D perspective. The third principle is about volumetric reconstruction. By means of technologies such as light fields, structured light, or tomography, a true 3D model with spatial structure is completely constructed [18].

2.4 Stereoscopic display technology

Stereoscopic display technologies refer to a range of optical and computational techniques that generate and display 3D images by creating depth perception in the human visual system. They encompass both real 3D images and virtual images produced by exploiting the optical illusions of the human eye. These technologies use methods such as holographic displays, binocular disparity, projection displays, light field 3D displays, or floating 3D displays to present images captured or rendered from distinct viewpoints to each eye, thereby assisting the brain in reconstructing spatial depth and a sense of realism. The features of 2D displays and stereoscopic displays are summarized in Table 1.

Immersive display technologies refer to advanced 3D stereoscopic systems that combine depth perception with interactive and immersive environments. They create visual or true effects with stereoscopic depth by optical technologies. Meanwhile, they enable user interaction via motion tracking, gesture recognition, or eye tracking. The combination enhances the realism and interactivity of stereoscopic content, making it possible for users to experience fully immersive virtual or augmented environments. The in-depth application of immersive technologies in the modern exhibition industry has fundamentally revolutionized how audiences experience events, shows and activities. With the rise of technologies like projection display, floating 3D, binocular parallax stereoscopic display (virtual reality, augmented reality, mixed reality), the static display modes of traditional exhibitions have evolved into an immersive experience paradigm that combines dynamic interactivity and strong appeal [19].

Stereoscopic display technologies can be divided into two main categories: glasses-wearing and glasses-free systems. Glasses-wearing stereoscopic technology typically relies on specialized displays or eyewear, such as stereoscopic glasses, polarized glasses, red-blue glasses, or shutter glasses, to deliver different views to each eye, thereby creating a sense of depth and a stereoscopic effect. This type of technology is commonly used in cinemas, TVs, and certain VR headsets [20]. Glasses-based stereoscopic display has several disadvantages, including discomfort and inconvenience, and it is restricted in terms of viewing angle, viewing method, and R&D costs. First, prolonged viewing can lead to visual fatigue, headaches, and dizziness. Second, people who already wear glasses face an extra burden and inconvenience when required to wear supplementary specialized glasses. Third, glasses-dependent stereoscopic display technology has strict requirements for viewing angles and distances. Fourth, this technology cannot support the collective viewing experiences essential to modern exhibitions. Fifth, this technology usually requires specialized hardware devices (e.g., dedicated eyewear or screens), increasing the cost and technical complexity of the system [20, 21].

Glasses-free stereoscopic technology employs specialized optical designs (e.g., parallax barriers, lenticular lenses, floating 3D display, and holographic display) to enable three-dimensional visual experience without requiring users to wear specialized eyewear [22]. By obviating the need for supplementary eyewear, the approach of glasses-free stereoscopic display alleviates discomfort while facilitating unrestricted movement and immersive stereoscopic experiences without physical interference. Furthermore, this technology supports multiple people in viewing with the naked eye simultaneously, making a breakthrough for the single-viewer constraints of glasses-dependent systems. Therefore, it is particularly suitable for public spaces and exhibition scenarios.

3 Technical principle and application scenarios

Stereoscopic display technology is typically classified based on the underlying principles and perception mechanisms of the depth effect. According to the differences in technical implementations, it is generally categorized into three types: true 3D, pseudo-3D, and false 3D [23, 24]. In brief, true 3D display technology reconstructs physical objects in objective reality as seen in true holographic and light-field displays. Pseudo-3D display technology deceives the visual system into perceiving solid objects, such as in binocular disparity stereoscopic displays. False 3D display technology can still create a cognitive illusion of depth, even when recognized by the visual system as 2D images.

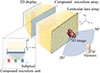

Here, based on practical application scenarios in the modern exhibition industry, this paper categorizes glasses-free stereoscopic display technologies into five types: holographic 3D display, optical illusion stereoscopic display, projection stereoscopic display, floating 3D display, and light field 3D display, as illustrated in Figure 1.

Figure 1 Classification of glasses-free stereoscopic display technologies.

The features of holographic 3D display, optical illusion display, projection stereoscopic display, floating 3D display and light field 3D display are listed in Table 2.

3.1 Holographic 3D display technologies

As shown in Table 2, holographic 3D display technology demonstrates obvious advantages in achieving true 3D visualization through its complete wavefront recording and reconstruction capabilities based on interference and diffraction. Compared with other stereoscopic display solutions, this technology can provide a higher sense of depth reality and is regarded as one of the most promising true 3D display solutions [25].

Laser holography involves two fundamental processes: recording and reconstruction [25]. Holographic recording utilizes the interference properties of laser light, whereby the diffuse reflection from the surface of a 3D object interferes with reference light, creating a pattern of alternating bright and dark interference fringes. This intricate pattern is subsequently stored in various photosensitive media, including CCD cameras, photographic films, photorefractive crystals, photorefractive polymers, and photochromic materials. Through this process, the optical information of both static and dynamic objects, including amplitude (i.e., light intensity) and phase (i.e., depth) information, is recorded in full. Holographic reconstruction employs the diffraction of laser light. When a laser beam of the appropriate wavelength is directed onto the storage medium, diffraction reconstructs the amplitude and phase distribution of the original object wave. Consequently, a 3D image consistent with the original object is reproduced. Compared with traditional acoustical, optical, and electronic display methods, holographic 3D display technology overcomes the bottlenecks of existing systems by producing high-contrast, high-resolution 3D images with depth perception, thereby achieving more realistic and immersive stereoscopic visual reproduction.
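The recording and reconstruction steps can be summarized with the standard two-beam interference model. This is a sketch only: the symbols $O$ (object wave), $R$ (reference wave), and $I$ (recorded intensity) are introduced here for illustration and are not taken from the original text.

```latex
\begin{aligned}
I(x,y) &= \lvert O + R \rvert^{2}
        = \lvert O \rvert^{2} + \lvert R \rvert^{2} + O R^{*} + O^{*} R, \\
R \, I(x,y) &= R \left( \lvert O \rvert^{2} + \lvert R \rvert^{2} \right)
        + \lvert R \rvert^{2} O + R^{2} O^{*}.
\end{aligned}
```

The first line describes recording: the fringe pattern stores the cross terms $O R^{*}$ and $O^{*} R$, which depend on the phase of the object wave. The second line describes reconstruction by illuminating the recorded pattern with the reference wave: the term $\lvert R \rvert^{2} O$ is proportional to the original object wave and therefore carries both its amplitude and its phase.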

With the development of computer and display technologies, the traditional holographic recording process has been realized through computational simulation methods, thus leading to the development of computational holographic technology. This technology mathematically describes the complex wavefronts through numerical calculations, which are subsequently encoded into holographic functions compatible with the display media. During the holographic reconstruction process, coherent light is used to illuminate the display media, reconstructing the 3D light field information of the object. The advantages of computational holographic technology include: 1) eliminating complex optical interference processes, thereby simplifying the recording procedure and obtaining virtual interference images; 2) overcoming the limitations of traditional photosensitive media, making holographic functions easier to store, replicate, and transmit; 3) introducing computational and digital technologies into optical processing and control, thereby advancing the development of wavefront pattern control and holographic technology; 4) aligning with the practical demands of exhibition applications, providing large-angle, immersive, dynamic, and color 3D holographic technology, in order to enhance the effectiveness of displays and shows [25, 26].
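As a minimal sketch of the numerical calculation described above, the following example sums spherical waves from a few object points on a hologram plane and keeps only the phase, which is one common way to encode a wavefront for a phase-type display. The wavelength, pixel pitch, object points, and function names are all illustrative assumptions rather than values or methods from the cited works.

```python
import cmath
import math

WAVELENGTH = 532e-9   # assumed green laser wavelength, in metres
PITCH = 8e-6          # assumed hologram pixel pitch, in metres
K = 2 * math.pi / WAVELENGTH  # wavenumber


def object_wave(points, nx, ny):
    """Sum spherical waves from (x, y, z, amplitude) points on an nx-by-ny plane at z = 0."""
    field = [[0j] * nx for _ in range(ny)]
    for row in range(ny):
        y = (row - ny / 2) * PITCH
        for col in range(nx):
            x = (col - nx / 2) * PITCH
            total = 0j
            for px, py, pz, amp in points:
                r = math.sqrt((x - px) ** 2 + (y - py) ** 2 + pz ** 2)
                total += amp / r * cmath.exp(1j * K * r)
            field[row][col] = total
    return field


def phase_only_hologram(field):
    """Keep only the phase of the complex field, wrapped to the range 0 to 2*pi."""
    return [[cmath.phase(v) % (2 * math.pi) for v in row] for row in field]


# Two hypothetical object points roughly 10 cm behind the hologram plane.
points = [(0.0, 0.0, 0.10, 1.0), (2e-4, -1e-4, 0.12, 0.8)]
hologram = phase_only_hologram(object_wave(points, nx=32, ny=32))
```

The cost of this direct summation grows with the number of object points times the number of hologram pixels, which gives a sense of why fast hologram generation algorithms matter for large scenes.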

Currently, holographic display technology faces several key challenges. First, the primary challenge is processing large-scale 3D scene data. Complex holographic scenes – especially real-time interactive and immersive applications – involve substantial data loads. This drives the urgent need to develop efficient, high-speed hologram generation algorithms. Second, the performance of spatial light modulators (SLMs) requires further optimization, particularly in terms of modulation capability, processing speed, resolution, and physical size. Third, computational encoding methods featuring high computational efficiency and superior reconstruction accuracy are essential for SLMs. Lastly, both display quality and system scalability still remain suboptimal. Current implementations are largely confined to laboratory settings and are not yet ideal for practical applications. Addressing these challenges requires the development of large-scale, wide-angle, and practical 3D holographic display platforms to improve the applicability of holographic technology in the real world [25–28].
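A rough calculation illustrates why modulator resolution and physical size are limiting. The sketch below uses the grating equation for a pixelated modulator, where the full diffraction angle is 2·asin(λ / 2p) for wavelength λ and pixel pitch p. The numerical values (532 nm, 3.74 µm pitch, a 30 cm by 20 cm aperture) are assumptions chosen for illustration, not figures from the cited works.

```python
import math


def slm_viewing_angle_deg(wavelength_m, pitch_m):
    """Full diffraction angle in degrees of a pixelated modulator: 2 * asin(lambda / (2 * pitch))."""
    return 2 * math.degrees(math.asin(wavelength_m / (2 * pitch_m)))


def pixels_required(width_m, height_m, pitch_m):
    """Number of pixels needed to fill a width-by-height aperture at the given pitch."""
    return round(width_m / pitch_m) * round(height_m / pitch_m)


# Assumed example values: green laser, fine-pitch modulator, desktop-sized aperture.
angle = slm_viewing_angle_deg(532e-9, 3.74e-6)
pixels = pixels_required(0.30, 0.20, 3.74e-6)
print(f"viewing angle: {angle:.1f} degrees, pixels: {pixels:.2e}")
```

Under these assumptions the viewing angle is only about 8 degrees, while filling the aperture already requires several billion pixels, which is consistent with the data-load and scalability challenges listed above.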

The technologies of recording and reconstruction for static holographic images have become relatively mature and have been successfully applied in settings such as museums. However, strictly defined forms of holography – such as analog holography, digital holography, rainbow holography, and holographic video displays – which require detachment from traditional display media to form 360°, free-viewpoint images directly in mid-air, are still in the experimental research stage, as shown in Figure 2 and Table 3. These technologies remain at a relatively low level of commercialization, particularly within the context of smart MICE.

Figure 2 Schematic illustration of tileable holobricks. (a) A single dynamic holobrick structure. (b) A single static holobrick structure. (c) Spatial tiling configuration of two dynamic holobricks. (d) Spatial tiling arrangement of six static and/or dynamic holobricks for displaying a large-scale object [29].

3.2 Optical illusion display technology

By employing basic optical principles, such as visual illusion, reflection, and refraction, the optical illusion stereoscopic display technology generates stereoscopic visual effects. While it shares some similarities with holographic 3D display technology, it cannot fully capture or reconstruct all the optical information of an object, especially the phase information [23]. Optical illusion display technology includes specular optical illusion, rotating fan display, Pepper’s ghost display, and binocular parallax stereoscopic display. These specific features are listed in Table 4.

3.2.1 Specular optical illusion display technology

Specular reflection can create a stereoscopic effect through visual illusion, a phenomenon commonly observed in daily life. When light shines on the surface of an object, it reflects at a specific angle to form a mirror image. This reflection enables the perception of the object’s image. Under certain compositions and viewing angles, specular reflection generates an illusion of depth, so that the image is interpreted as a genuine three-dimensional space. Such optical illusions significantly influence human perception and conceptual understanding of the world, causing deviations in observations and cognitive interpretations by “misleading” and “deceiving” the senses. Facade design can tactfully employ the principles of optical illusions to create remarkable visual and spatial experiences. As an artistic expression, optical illusion not only enhances our understanding of visual experiences, but also uses linear perspective, visual gradient, and shadows to construct stereoscopic spatial illusions on 2D surfaces [30]. For example, “Cloud Gate” in Millennium Park, Chicago, USA, shows intensified luminance and visual vitality when illuminated at night. The sculpture’s seamless curved surface optically converges the ambient plaza lighting. This specular reflectivity magnifies the perceived brilliance and spatial vibrancy of the luminous surroundings [31].

Specular optical illusion display technology shows several limitations in constructing stereoscopic effects of objects. First, the effectiveness of stereoscopic reflective images is viewing-angle dependent, as the image may be invisible or distorted at a specific angle. Second, precise mirror alignment and detailed calculation of light reflection paths may increase both the cost and design complexity of the optical systems. Third, stray light and multiple reflections in the environment can reduce the clarity and stability of stereoscopic images. Fourth, the physical properties of reflected light impose constraints on the depth and width of stereoscopic images, especially limiting the representation of complex structures. Lastly, surface quality defects directly reduce the quality of stereoscopic image effects [30].

3.2.2 Rotating fan display technology

The stereoscopic fan screen achieves a dynamic visual effect by equipping its blades with multiple sets of LED arrays. As the LEDs rotate with the blades at a specific speed, the human eye perceives continuous images formed by these LED arrays, as shown in Figure 3. Enhanced with stereoscopic vision technology, the system further generates an air suspension effect and displays three-dimensional images. Under low-light conditions, the fan blades rotating at high speed become invisible to viewers. The blade surfaces, which serve as the display medium, perceptually “disappear”, leaving only the foreground LED images to be seen. The result is the illusion of stereoscopic imagery suspended in real space [32]. Notably, rotating fan images are invisible from both the sides and the rear. This technology overcomes the limitations and monotony of traditional flat displays by enabling real-time synchronization and immersive experience, positioning it as a new trend in the commercial stereoscopic display industry [33].

Figure 3 Rotating fan and its visual display effects, Xiamen, China, January 2026 (source: authors).
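The way a rotating LED strip can draw a flat picture may be sketched as a resampling from image coordinates to blade angle and LED position. The function below is a hypothetical illustration, not the control logic of any commercial fan screen: for each angular step of one blade it looks up which image pixel each LED should show at that instant.

```python
import math


def led_columns(image, num_leds, num_angles):
    """Resample a square image (list of rows) into per-angle LED patterns for one blade.

    Returns num_angles lists, each holding the pixel value every LED should show
    when the blade passes that angle. LED 0 sits at the hub, the last LED at the tip.
    """
    size = len(image)
    centre = (size - 1) / 2
    frames = []
    for step in range(num_angles):
        theta = 2 * math.pi * step / num_angles
        column = []
        for led in range(num_leds):
            radius = centre * led / (num_leds - 1)
            col = round(centre + radius * math.cos(theta))
            row = round(centre - radius * math.sin(theta))
            column.append(image[row][col])
        frames.append(column)
    return frames


# A 5x5 test image whose pixel value encodes its position as 10 * row + column.
image = [[10 * r + c for c in range(5)] for r in range(5)]
frames = led_columns(image, num_leds=3, num_angles=4)
print(frames[0])  # LED values while the blade points to the right
```

If the blade sweeps through all angular steps faster than the eye can resolve, the individual LED patterns fuse into one continuous picture, which is the persistence-of-vision effect the text describes.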

A simple image within a confined area can be generated by a single fan screen, while a larger, more expansive image can be created by seamlessly “splicing” multiple fans together. Depending on the installation method, these screens can be classified into landing-joint screens, lifting-joint screens, and curtain wall-joint screens. Various stereoscopic rotating fan technologies, including “splicing” and “connecting” screens, have been extensively applied across multiple sectors such as exhibitions, advertising media, retail environments, cultural tourism, and stage performances [34]. The rotating fan display technology generates visual effects through the rotation of blades, resulting in relatively simple technical requirements. To ensure viewers’ safety, the rotating fan blades must be enclosed within a transparent glass or acrylic casing, preventing accidental contact with the high-speed moving components. Additionally, the mechanical movement inherent to this technology may produce noise, which requires special attention in noise-sensitive environments, such as libraries and offices. Moreover, when capturing such displays with a smartphone camera, the frame rate and rolling shutter effect may interfere with the perception of the stereoscopic image, resulting in flickering or distortion. This is primarily caused by the discrepancy between the camera’s capture speed and the rotational speed of the fan blades.
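The camera artifact mentioned above is a form of temporal aliasing. As a simplified sketch (it ignores rolling shutter and assumes identical blades, and the function name and numbers are illustrative), the apparent blade movement between consecutive camera frames can be estimated as follows.

```python
def blade_advance_per_frame(rotation_hz, camera_fps, num_blades):
    """Apparent blade movement in degrees between consecutive camera frames.

    A fan with num_blades identical blades looks the same every 360 / num_blades
    degrees, so the true step is folded into half that symmetry angle on either
    side of zero. Negative values mean the blades appear to turn backwards.
    """
    symmetry = 360.0 / num_blades
    step = (360.0 * rotation_hz / camera_fps) % symmetry
    if step > symmetry / 2:
        step -= symmetry
    return step


# Assumed example: a two-blade fan filmed at 30 frames per second.
print(blade_advance_per_frame(15, 30, 2))  # blades land in the same place each frame
print(blade_advance_per_frame(12, 30, 2))  # blades appear to creep backwards
```

When the apparent step is zero, the camera repeatedly catches the blades in the same position and records only a slice of the image; other combinations produce the drifting or flickering patterns seen in smartphone footage.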

3.2.3 Pepper’s ghost display technology

Pepper’s ghost technology, with its long history and mature technical development, is now widely used in settings such as theme parks, haunted houses, and stage dramas. A “semi-transparent and semi-reflective” film is positioned at a 45° angle to the stage floor, as shown in Figure 4. Images with predominantly black backgrounds, such as an actor’s performance or special visual effects, are projected in parallel from above onto the ground. Through this semi-transparent, semi-reflective film, the audience perceives these images as vertically oriented within the actual stage space [35].

Figure 4 The principle of Pepper’s ghost display technology.
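The geometry behind the 45° film can be checked with a plane-mirror reflection in a 2D side view. In the sketch below, the film is modeled as a line through the origin, the coordinate layout is an assumption made for illustration, and `reflect_across_film` is a hypothetical helper name.

```python
import math


def reflect_across_film(point, film_angle_deg=45.0):
    """Mirror a 2D side-view point (x, y) across a film passing through the origin.

    x runs along the stage floor and y is height; the film makes
    film_angle_deg with the floor.
    """
    x, y = point
    a = math.radians(2 * film_angle_deg)
    return (x * math.cos(a) + y * math.sin(a),
            x * math.sin(a) - y * math.cos(a))


# Three points of an image lying flat on the floor (y = 0).
floor_image = [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
virtual_image = [reflect_across_film(p) for p in floor_image]
for x, y in virtual_image:
    print(round(x, 6), round(y, 6))
```

With a 45° film, the points lying along the floor map to points stacked at different heights above the origin, which is why the audience perceives a flat floor image as an upright figure on stage.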

In 2023, Milka Trajkova et al. introduced LuminAI, a co-creative dance interaction system that integrates artificial intelligence (AI) technology with the Pepper’s ghost illusion, as shown in Figure 5 [36]. The core innovation of this system lies in the combination of classical visual illusion technology and a modern modular AI agent, constructing a five-module processing pipeline that includes perception, action segmentation, learning, transformation, and selection/generation. The system captures the dancer’s movements in real time through motion sensing technology and encodes the actions based on Laban Movement Analysis (LMA) theory, thereby achieving intelligent generation of dance responses. The system uses a lightweight Hologauze projection screen to display floating stereoscopic images, achieving an immersive dance interaction experience at a low cost. This work establishes a novel technical framework for human-AI collaborative improvisation, which is suitable for artistic performance, public exhibitions, dance education, and rehabilitation training. Thus, this demonstrates the broad potential of AI in enhancing human creativity and physical expressiveness.

Figure 5 A co-creative dance interaction system that integrates AI technology with the Pepper’s ghost illusion [36].

Another typical example is a stereoscopic advertising display cabinet designed based on the Pepper’s ghost optical principle. It projects floating stereoscopic images inside the cabinet by integrating stereoscopic modeling with real scenes. Following the stereoscopic display principle, the process begins with capturing product images and constructing a stereoscopic model, followed by integrating these elements into the scene to build a display system capable of presenting both static and dynamic visual effects of products. Unlike conventional stereoscopic displays, this technology enables multi-angle naked-eye viewing within a conical viewing zone – covering 180°, 270°, or even 360° – without requiring viewers to wear glasses [37]. With high fidelity and depth, it creates an immersive experience while offering outstanding product visibility and interactivity. Distinct from the mechanism used in a stereoscopic fan display cabinet, the Pepper’s ghost stereoscopic display cabinet employs a unique structural design, commonly configured in either upright or inverted pyramid orientations.

3.2.4 Binocular parallax glasses-free stereoscopic display technology

Binocular parallax-based stereoscopic display technology encodes and reconstructs the optical information of objects via “digital” or “physical” means to simulate human eye stereoscopic vision [38]. The principle stems from the human binocular parallax perception mechanism: due to the differences in viewing angles, the images captured by both eyes are slightly different. The brain processes these images and converts them into depth information, thereby achieving the distinction between the foreground and background distances as well as stereoscopic perception [38, 39]. However, although existing stereoscopic display technologies partially simulate this physiological mechanism, they are essentially different from the true 3D light field reproduction technology based on wavefront reconstruction [40]. Specifically, binocular parallax stereoscopic display still relies on imaging media, limited by auxiliary viewing devices, restricted viewing angles, and difficulty in presenting complex scenes in real-time [39].
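The relationship between perceived depth and the offset between the two eye images follows from similar triangles. The sketch below is illustrative only: the parameter names and the 65 mm eye separation are assumptions, and the viewer is taken to look straight at the screen.

```python
def screen_parallax(eye_separation, viewing_distance, depth_behind_screen):
    """Horizontal offset between left-eye and right-eye image points on the screen.

    Positive depth places the point behind the screen (uncrossed parallax),
    negative depth places it in front of the screen (crossed parallax).
    All lengths share one unit.
    """
    return eye_separation * depth_behind_screen / (viewing_distance + depth_behind_screen)


# Assumed values: 65 mm eye separation, viewer 2 m from the screen.
for depth in (-1.0, 0.0, 2.0):
    print(depth, screen_parallax(0.065, 2.0, depth))
```

A point on the screen plane needs no offset, and the offset approaches the eye separation as the point recedes to infinity. A display that supplies only this cue, without reconstructing the wavefront, is what the text distinguishes from true 3D light field reproduction.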

Based on the light-splitting method used, binocular parallax glasses-free stereoscopic technologies can be further classified into three categories: grating-based (light barrier technology), cylindrical lens-based, and directional backlight-based. A glasses-free stereoscopic display that utilizes grating technology has been developed by Ningbo Vision Display Technology Co., Ltd. Compared to the other two approaches, the advantages of cylindrical lens-based stereoscopic imaging technology include no loss of brightness, compatibility with various lens types (e.g., cylindrical, trapezoidal, and triangular lenses), the ability to eliminate Moiré patterns, suitability for display screens of any size, and the capability to achieve multi-screen integrated configurations [41].
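Grating-based and cylindrical lens-based displays both depend on interleaving several views on one panel so that the optical layer can send each view in a different direction. The following sketch shows column-wise interleaving in its simplest form; it is a hypothetical illustration, and real panels typically interleave at sub-pixel level, often with slanted lenses.

```python
def interleave_views(views):
    """Build one panel image from N equally sized views (each a list of rows).

    Panel column c shows column c of view (c mod N), so a barrier or lens sheet
    with a period of N columns can steer each view in its own direction.
    """
    n = len(views)
    height, width = len(views[0]), len(views[0][0])
    return [[views[c % n][r][c] for c in range(width)] for r in range(height)]


# Two tiny 2x4 views labelled by eye.
left = [["L"] * 4 for _ in range(2)]
right = [["R"] * 4 for _ in range(2)]
panel = interleave_views([left, right])
print(panel[0])
```

Because each view occupies only every N-th column, horizontal resolution per view falls as the number of views rises, which is one of the trade-offs behind the comparison of the three approaches.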

3.3 Projection stereoscopic display technology

In the field of cultural heritage tourism, projection stereoscopic display technology has emerged as a vital medium for reconstructing historical narratives and interpreting cultural memory. By means of spatialized visual representation and multi-sensory design, this technology transforms audiences from “observers” into “participants,” allowing them to transcend time and space in historical contexts. Specifically, in settings such as ancient city ruins, stereoscopic projections can accurately recreate historical street layouts, scenes of daily life, and key historical events. Accompanied by soundscapes, lighting, shadows, and interactive elements, an immersive cultural experience space is constructed, promoting the revitalization, transmission, and continuous reinterpretation of cultural heritage. On one hand, this technology endows static heritage assets with dynamic vitality, substantially enhancing the appeal of cultural tourism and helping to engage younger cohorts. On the other hand, it also promotes the revitalization of cultural heritage and the reproduction of historical meanings [42]. Nevertheless, its widespread implementation faces dual constraints: technical barriers and ethical considerations regarding content creation. The inherent system complexity demands substantial capital investment as well as professional design, construction, and maintenance teams. Concurrently, content creators must maintain a delicate balance between historical authenticity and performance engagement [43].

From the perspective of technical principles, projection stereoscopic display technology adopts advanced technologies such as stereoscopic projection and point cloud scanning to project images or videos onto the targeted surface, generating a realistic 3D effect. This technology precisely regulates the refraction and reflection of light, enabling audiences to view 3D images from various angles. It is particularly ideal for outdoor scenes such as buildings, plazas, squares and parks, enriching urban landscapes with unique artistic expression and visual appeal. When performing outdoor operations, specialized equipment is required, including a laser projector, a projection lens and a screen. Laser projectors must exhibit high brightness and contrast to enhance the realism of projected images. The selection and configuration of projection lenses require precise adjustment according to the projection area and distance to ensure clarity and color fidelity. Screen selection must account for external factors such as ambient light and wind force to ensure the stability and visibility of the image [43]. Based on the multiplexing techniques employed, projection stereoscopic display technology can be classified into two categories: multi-projection systems and high-speed projection systems [43, 44].
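
As a rough illustration of how lens choice and brightness follow from the projection area and distance, the sketch below uses two standard relations: the throw ratio (projection distance divided by image width) and the average illuminance on the surface (luminous flux divided by image area, ignoring optical losses and surface gain). The function names and the numerical values are hypothetical and are not drawn from the cited sources.

```python
def throw_ratio(distance_m: float, image_width_m: float) -> float:
    """Throw ratio a lens must provide to fill image_width_m from distance_m."""
    return distance_m / image_width_m


def screen_illuminance_lux(projector_lumens: float, image_width_m: float,
                           image_height_m: float) -> float:
    """Average illuminance on the surface, ignoring losses and surface gain."""
    return projector_lumens / (image_width_m * image_height_m)


if __name__ == "__main__":
    # Hypothetical facade: 20 m x 11.25 m image, projector placed 30 m away.
    print("required throw ratio:", throw_ratio(30.0, 20.0))
    print("illuminance (lux):", screen_illuminance_lux(45_000, 20.0, 11.25))
```

Comparing the resulting illuminance with the ambient light level at the site gives a first indication of whether the image will remain visible, which is the constraint on screen selection noted above.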

3.3.1 Projection of buildings, mountains and other walls

In the field of architectural projection, projection stereoscopic display technology utilizes projection techniques to cast light onto a building’s surface, achieving a spatiotemporal transformation from static structures to vibrant visual interfaces and creating stunning, immersive spectacles. Through the redesign of lighting settings, this technology significantly enhances the visual appeal of landmark buildings, artistic districts, and tourist attractions for the nighttime economy [45]. Similarly, mountain projection utilizes natural terrain as a medium, integrating light-shadow narratives with environmental aesthetics, to inspire the audience’s awe toward nature and a sense of immersion in the landscape, as illustrated in Figures 6 and 7. Furthermore, this technology demonstrates a wide range of media adaptability, extending its application to various carriers such as ships, vehicles, sculptures, and irregular-shaped objects, thereby providing innovative spatial media for commercial exhibitions and product launches.

|

Figure 6 Mountain projection with images, Wuyishan, China, July 2025 (source: authors). |

|

Figure 7 Mountain projection with Chinese characters, Wuyishan, China, July 2025 (source: authors). |

3.3.2 Projection of water curtain and fog curtain

The “curtain” of water curtain projection is produced by a high-pressure pump and a specialized water curtain generator. The water flows at high speed, forming a fan-shaped projection “screen” onto which a dedicated projector casts customized images. Typically, the height of the water curtain exceeds 20 m and its width is between 30 and 60 m. After startup, the water screen projection can present striking 3D stereoscopic and spatial visual effects, as shown in Figure 8. From the audience’s viewpoint, the fan-shaped water curtain blends naturally with the night sky, and the characters in the image seem to soar or descend from above, creating an ethereal and immersive experience. This technology is innovative and distinctive in effect, and is suitable for advertising and promotion at large-scale festivals, open public squares, and theme parks [46].

|

Figure 8 Water curtain projection at Sijiaojing, Liancheng, China, June 2025 (source: authors). |

The deployment of water curtain projection technology is subject to multiple constraints. First, the image quality is highly sensitive to ambient illumination, with visibility significantly degrading under daylight conditions, thereby restricting applicability to low-lux or nocturnal environments. Second, the technology depends on an ample water supply, which restricts it to locations with natural water bodies (e.g., lakes, rivers) or to indoor/outdoor venues with an artificial water supply. Third, the dispersion of atomized water droplets may cause discomfort and clothing dampness for audiences. Fourth, the capacity of water pumps restricts the size of the water curtain, directly limiting the display area and performance duration. Fifth, the imaging system exhibits optical performance limitations, such as low resolution, limited luminous intensity, and susceptibility to interference from ambient lighting (see Figure 8). Finally, the constrained viewing angles reduce the number of optimal viewing spots.

Fog curtain projection technology shares a similar technical principle with water curtain projection, using the particles in the fog medium as carriers to create an illusory stereoscopic visual effect. This technology exhibits two key characteristics. First, due to the transient nature of the fog screen, audiences can freely walk through it and interact with the virtual images, making it suitable for stage performances in low-light and nighttime environments. Second, with a typical projection height ranging from 1 to 1.5 m, the system employs an integrated ultrasonic mechanism instead of traditional mechanical drives. This setup not only enables efficient atomization and the release of high-concentration negative ions, but also offers operational advantages such as low noise, low failure rates, and ease of maintenance.

3.4 Floating 3D display technology

Floating 3D display technology refers to an optical technique that generates dynamic volumetric imagery in free space. Its core principle relies on light field reconstruction or persistence of vision to transform 2D or 3D image source data into optical projections with depth perception [47, 48]. This technology integrates optical engineering, computer vision, and human visual perception mechanisms, requiring high-precision modulation of light wave phase, amplitude, and propagation paths to achieve suspended display effects with physical depth and multi-angle visibility [49].

Based on the fundamental principles and practical applications, this paper classifies floating 3D display technology into six methods: drone-based floating stereoscopic display, floating stereoscopic display of negative refractive index materials, floating stereoscopic display of microchannel matrix optical waveguide, floating stereoscopic display of retro-reflective film, plasma-based floating stereoscopic display, and acoustic wave-based floating stereoscopic display [47]. The detailed features of the six display methods are listed in Table 5.

Table 5 The features of drone-based floating stereoscopic display, floating stereoscopic display of negative refractive index materials, floating stereoscopic display of microchannel matrix optical waveguide, floating stereoscopic display of retro-reflective film, plasma-based floating stereoscopic display, and acoustic wave-based floating stereoscopic display [47–59].

3.4.1 Floating 3D display of drones

The application of drone-based floating 3D display marks a significant leap in the realm of night-time entertainment, integrating cutting-edge technology with creative expression, as shown in Figures 9 and 10. Unlike traditional fireworks, which are limited to brief bursts of light and sound, drone light shows offer dynamic, programmable displays capable of lasting longer, changing shapes, and even interacting with their environment in real time. These performances rely on sophisticated algorithms and precise synchronization to control hundreds, and in some cases thousands, of drones simultaneously, creating fluid and captivating 3D patterns and animations. The drones are equipped with LED lights that can change color and brightness, enhancing the visual depth and detail of the images. This technology allows for an unprecedented level of customization and creativity, with the ability to depict complex scenes and information, and even mimic shapes and logos in mid-air. Compared to traditional fireworks that produce smoke, debris, and noise, drone light shows are more eco-friendly. As a result, they have gained widespread popularity worldwide, particularly in settings such as national celebrations, large-scale concerts, sporting events, and cultural festivals. The adaptability, precision, and sustainability of drone light displays make them an attractive choice for modern event organizers seeking to create memorable experiences [48].

|

Figure 9 Drone show of a hand vs. a gift, Xiamen, China, January 2026 (source: authors). |

|

Figure 10 Drone show of Chinese characters, Xiamen, China, January 2026 (source: authors). |

In 2025, a drone performance in Chongqing demonstrated core advantages in achieving highly reliable and precise swarm control of an ultra-large-scale formation – comprising 11,787 drones – within the complex urban mountainous environment, showcasing world-leading capabilities in collaborative aerial technology [49]. Its innovation is characterized by dual breakthroughs in both technical methodology and artistic expression. The “dual-formation” control architecture successfully transforms abstract cultural elements, such as classical Chinese poetry, the city flower, and the spirit of Chongqing, into dynamic, 3D aerial imagery, thereby achieving a profound integration of cutting-edge technology with rich cultural heritage. The initiative holds significant practical value. It establishes a global benchmark for integrating culture and tourism in the emerging low-altitude economy, attracting clusters of high-value industries. Moreover, through the spectacular blend of “technology and culture”, it enhances Chongqing’s image as a dynamic, innovative, and internationally appealing city. This achievement significantly accelerates the shift of drone swarm technology from laboratory demonstrations to practical industrial applications, establishing an advanced model that enhances urban soft power.

To ensure that thousands of drones can execute pre-designed 3D animations at an altitude of 100 meters, this system integrates expertise across multiple fields, such as multi-rotor aircraft, automatic control, wireless communication, flight path planning, 3D modeling, and LED programming. During the design phase, text and images are incorporated into 3D animations, with specialized software and algorithms generating unique flight paths for each drone. Each drone accurately determines its position in the air, ensuring vivid and detailed animations through precise positioning. As the number of drones increases, the complexity and richness of the images are enhanced accordingly. However, higher drone counts also lead to exponential growth in communication data volume, imposing greater demands on back-end processing and analytical capabilities [48].
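
The step in which software assigns each drone its own flight path can be sketched for a very small swarm. The example below is only an illustration under simplifying assumptions: it picks the drone-to-target assignment with the smallest total straight-line distance by brute force, and then interpolates linearly between start and target. The function names are hypothetical, collision avoidance and communication are ignored, and brute force is feasible only for a handful of drones; production systems for thousands of drones would presumably rely on scalable assignment and trajectory solvers.

```python
from itertools import permutations
from math import dist

Point = tuple[float, float, float]


def assign_targets(starts: list[Point], targets: list[Point]) -> tuple[int, ...]:
    """Return a tuple where entry i is the target index flown by drone i.

    Brute-force search over all assignments, minimising total flight distance.
    """
    if len(starts) != len(targets):
        raise ValueError("need exactly one target per drone")
    return min(
        permutations(range(len(targets))),
        key=lambda perm: sum(dist(starts[i], targets[j]) for i, j in enumerate(perm)),
    )


def waypoint(start: Point, target: Point, fraction: float) -> Point:
    """Position along the straight line from start to target (fraction in 0..1)."""
    return tuple(s + fraction * (t - s) for s, t in zip(start, target))


if __name__ == "__main__":
    # Three drones on the ground, three pixels of a shape at 100 m altitude.
    starts = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (20.0, 0.0, 0.0)]
    targets = [(20.0, 0.0, 100.0), (0.0, 0.0, 100.0), (10.0, 0.0, 100.0)]
    plan = assign_targets(starts, targets)
    for drone, target_index in enumerate(plan):
        print(drone, "->", targets[target_index],
              "midpoint", waypoint(starts[drone], targets[target_index], 0.5))
```

Because the number of possible assignments grows factorially with the number of drones, the sketch also hints at why larger formations place heavier demands on back-end computation.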

In 2025, Martin Schuck et al. pioneered the use of a large language model (LLM) as a “choreographer” for drone swarm performances, enabling users to intuitively describe dance movements in natural language while the system autonomously generates synchronized drone trajectories, as shown in Figure 11 [50]. By decoupling high-level artistic expression (conveyed through language) from low-level motion planning (ensured through safety control), the system significantly lowers the technical barrier for designing choreography. This technology holds promising potential for applications in large-scale events, including concerts, sports competitions, opening ceremonies, and light shows.

|

Figure 11 (a) A long-exposure image shows 12 drones transitioning from a helix to a spiral motion in sync with a musical beat. (b) A close-up view of one of the drones during the performance [50]. |

Unlike the earlier market, which was largely driven by government clients, cultural tourism projects and tourist attractions are now becoming the main focus of the drone show market. In 2021, these projects accounted for 36% of the market. Research shows that the global drone show market was valued at around $170 million in 2021 and is expected to grow to $719 million by 2028.
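
For orientation, the growth implied by these two market figures can be expressed as a compound annual growth rate (CAGR). The short calculation below is a derived illustration based only on the two values quoted above; the function name is hypothetical and the rate itself is not reported in the source.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values separated by `years`."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # $170 million (2021) growing to $719 million (2028), i.e. over 7 years.
    rate = cagr(170.0, 719.0, 2028 - 2021)
    print(f"implied CAGR: {rate:.1%}")
```

Under these figures the implied rate is roughly 23% per year.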

3.4.2 Floating 3D display of negative refractive index materials

This technology has revolutionized traditional display and human-computer interaction through its core innovation, the negative refractive index flat lens. By utilizing light field reconstruction, it can reassemble scattered light in the air to produce real images without a physical medium. With interactive control technology, it allows direct interaction between people and real images in the air. A micro-array structure exhibiting negative refraction properties has been designed, which enables periodic modulation and focusing of the optical path without relying on a curved surface, as shown in Figure 12. All light rays within the light source’s divergence angle converge to the axis-symmetric position after passing through the flat lens, creating a 1:1 real image and enabling aerial display [51].

|

Figure 12 The display principle of medium-free 3D technology relies on a microchannel matrix optical waveguide plate and negative-index materials. |

A flat film exhibiting a negative refractive index appears externally as a single sheet of glass but is in fact composed of a dense array of microscopic lenses. The main technical challenge in fabricating such optical glass lies in its highly compact lattice and grating structure. The microlens array gives rise to a “negative refraction” phenomenon, which causes diverging light rays to converge symmetrically onto a flat plane. This process produces a life-sized 3D image and enables medium-free aerial display.

3.4.3 Floating 3D display of microchannel matrix optical waveguide

Floating 3D display, as the term suggests, is a method for generating 3D images without the need for a physical medium. At the core of this technology lies the micro-channel matrix optical waveguide plate (MOW-plate), a nanoscale display material derived from advances in nano-optics. This material reconstructs the light field through an optical micro-mirror structure, producing a real image that can be interactively displayed in mid-air, as shown in Figure 12 [52, 53]. The integration of interactive algorithms with user experience design forms the foundation of medium-free 3D display technology, as depicted in Figure 13.

|

Figure 13 (a) Principle of a retro-reflective surface, and (b) principle of a cube-corner retro-reflector [54]. |

The MOW-plate is crucial to the realization of this technology. Its optical micro-mirror structure captures the intensity, angle, wavelength, and other properties of each light beam that travels from the physical light source in the “object space” onto the plate. It then reproduces these beams, faithfully mirroring them into the “image space” on the opposite side of the array. The replicated rays are refocused to form a real image at the position in the “image space” that is symmetrical to the source in the “object space”. This image is a complete mirror of the original, medium-free, and visible to the naked eye.
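
The symmetrical relationship between the source and its floating image can be sketched as a simple geometric reflection. The example assumes an idealized plate modelled as an infinitely thin plane, so that every source point maps to its mirror position on the other side; the function name and coordinates are illustrative, and real plates introduce losses and aberrations that are not modelled here.

```python
from math import sqrt

Vec3 = tuple[float, float, float]


def mirror_across_plate(point: Vec3, plate_point: Vec3, plate_normal: Vec3) -> Vec3:
    """Mirror `point` across the plane through `plate_point` with `plate_normal`."""
    length = sqrt(sum(c * c for c in plate_normal))
    unit = tuple(c / length for c in plate_normal)
    # Signed distance from the point to the plate along the unit normal.
    signed = sum((p - q) * n for p, q, n in zip(point, plate_point, unit))
    return tuple(p - 2.0 * signed * n for p, n in zip(point, unit))


if __name__ == "__main__":
    # Plate in the plane z = 0; a source pixel 3 units below it.
    source = (1.0, 2.0, -3.0)
    image = mirror_across_plate(source, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
    print("source:", source, "floating image:", image)
```

Applying the same mapping to every pixel of the source display yields an image of the same size at the same distance from the plate, consistent with the 1:1 imaging described for this class of devices.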

3.4.4 Floating 3D display of retro-reflective film

The principle of a retro-reflective surface is illustrated in Figure 13a [54]. An optical ray incident on the surface with a direction (α, β) is reflected back along the same direction within a narrow diffusion cone (ψ, φ). Two primary solutions exist to achieve this specific geometrical effect. The first involves the use of microbeads, where the incident optical ray is focused and reflected from the rear surface of a spherical lens. This solution is commonly employed in safety jackets but suffers from relatively low efficiency and cannot be easily encapsulated. The second, more widely adopted solution – particularly in road signage – is the cube corner geometry. Figure 13b illustrates the operating principle of this optical element, which consists of three mutually perpendicular mirrors [54]. Due to the geometric configuration, an incident light ray that enters the element and undergoes successive reflections from each mirror is returned along a path parallel to its original direction. This optical component has been well known and widely manufactured at the macroscopic scale for many years; however, its fabrication at the microscopic scale – particularly for the development of thin retro-reflective films – remains an active area of research. In the display, the retro-reflected rays form a floating 3D image. As a cutting-edge 3D display technology, this floating display has a wide range of applications, such as advertising, entertainment, educational training, and navigation systems. By imaging various content forms in mid-air, this technology provides a unique visual experience that attracts attention and interest [55, 56].
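
The cube corner behaviour can be checked with a few lines of vector arithmetic. The sketch below applies the standard mirror-reflection rule d' = d − 2(d·n)n once for each of three mutually perpendicular mirrors, here assumed to be aligned with the coordinate axes; each reflection flips one component, so the outgoing direction is the exact reverse of the incoming one. The function names are illustrative, and the positional offset of the ray and the diffusion cone are not modelled.

```python
Vec3 = tuple[float, float, float]


def reflect(direction: Vec3, unit_normal: Vec3) -> Vec3:
    """Reflect a direction vector off a mirror with the given unit normal."""
    dot = sum(d * n for d, n in zip(direction, unit_normal))
    return tuple(d - 2.0 * dot * n for d, n in zip(direction, unit_normal))


def cube_corner(direction: Vec3) -> Vec3:
    """Direction after one reflection from each of three perpendicular mirrors."""
    for normal in ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)):
        direction = reflect(direction, normal)
    return direction


if __name__ == "__main__":
    incoming = (0.3, -0.5, -0.8)
    print("incoming:", incoming, "outgoing:", cube_corner(incoming))
```

The result does not depend on the order of the three reflections, which is why the element returns light toward its source over a wide range of incidence angles.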

In 2020, Christophe Martinez proposed a 360° volumetric display system based on a transparent film with a sparse cube corner array. By modifying the Pepper’s ghost optical configuration, images are projected onto a retro-reflective transparent surface with high angular selectivity, creating floating virtual imagery as shown in Figure 14 [54]. The system enables multi-user, multi-view stereoscopic viewing without moving parts, offering high brightness, high transparency, and low-cost advantages, with broad application potential in artistic exhibitions, remote collaboration, and augmented reality.

|

Figure 14 (a) Geometrical configuration of the retro-reflective projection system module, (b) view of the module showing the retro-reflective projection of a virtual retro-reflected image at a viewing angle close to 0°, and (c) side view of the optical module [54]. |

In the fast-growing realm of the metaverse, this floating 3D display serves as a pivotal technology, enabling the seamless integration of virtual and real environments. It offers unique advantages in several aspects. First, it delivers seamless integration. Unlike VR, which creates a fully virtual setting, medium-free 3D technology merges virtual images with physical objects and actual surroundings, creating a unique spatiotemporal experience. Second, it significantly enhances user experience by addressing typical constraints of VR and AR – such as spatial limitations, discomfort from wearable devices, motion sickness, and reliance on dynamic compensation. Through the synergy of sensing technology, content creation, and intelligent algorithms, it delivers more adaptable and immersive interactions. Third, it enables wearable-free interaction. While VR and AR often require users to wear devices for both visualization and engagement, floating 3D technology integrates virtual images directly into the environment without the need for user-worn hardware.

3.4.5 Floating 3D display of plasma

In 2015, researchers at the University of Tsukuba in Japan introduced a breakthrough technology that creates 3D plasma pixels in mid-air using femtosecond laser pulses, enabling interactive 3D images that respond to human touch [57]. This floating 3D display technology, known as “Fairy Lights”, offers a promising new mode of interaction for the future, as shown in Figure 15.

|

Figure 15 Application images of Fairy Lights in Femtoseconds – three-dimensional, aerial, and volumetric graphics displayed in mid-air through the use of femtosecond laser technology [57]. |

The plasma-based floating display system uses precisely calibrated lasers to selectively ionize air molecules, producing white light. The team also integrated a touch function, making the 3D system both visible and interactive to touch. The system works by directing a laser beam at specific points in the air, ionizing the gas and creating floating plasma. Earlier prototypes used nanosecond laser pulses, which overheated the plasma and caused skin burns [57].

The updated technology uses femtosecond laser pulses, which generate shorter plasma bursts at higher frequencies, preventing prolonged focus on one area and avoiding skin burns. The brightness increases upon touch, and this effect has been incorporated into the system’s touch functionality. When the hand touches the projected image, the system recognizes it instantly, displays different images, and even simulates a sense of physical presence.

3.4.6 Floating 3D display of acoustic wave

In 2019, researchers at the University of Sussex in the UK developed a novel technology called the multi-modal acoustic trap display (MATD). Using ultrasonic speakers to manipulate tiny particles, MATD can simultaneously deliver visual, auditory, and tactile content, creating a floating display that changes shape in mid-air [58, 59]. The particles are manipulated in the air by a simple device: two slim arrays of 256 tiny speakers that move particles using ultrasonic waves. The rapid movement of these particles creates a 3D image several centimeters wide, perceived by the naked eye as a swiftly shifting geometric form in the air.

In the report, lead researcher Ryuji Hirayama demonstrated programs that allowed him to control suspended particles – white blobs that rose and then hovered motionless in mid-air. With another tap, the blob transformed into a glowing butterfly, its wings still fluttering within the black box. Interestingly, the ultrasonic speakers not only create images but also produce sound and enable tactile sensations. When you touch the butterfly, you can feel a subtle vibration on your fingers. The speakers are positioned on both sides of the display, limiting the viewer’s ability to interact with it and constraining the display’s size. However, with hardware upgrades, Subramanian stated that a different type of sound wave could be used, allowing the speakers to generate images using only one side [59].

The approach of this display is fundamentally different from technologies like holograms, virtual reality, and polarized stereoscopic display. These familiar technologies rely on light to create an illusion of depth, providing viewers with a sense of realism. However, holograms can only be viewed from specific angles, virtual reality and polarized stereoscopic display necessitate helmets or glasses, and all of these technologies can induce eye strain.

Although the two aforementioned technologies, plasma-based and acoustic wave-based floating display, have made significant breakthroughs and are full of potential, they come with high costs, limited applications, and a narrow range of display effects. Currently, they remain in the laboratory development phase and are unlikely to become commercially viable in the short term.

3.5 Light field 3D display technology

This technology captures light field information (light ray direction, position, and intensity) to record and reconstruct the 3D structure of a scene, providing multi-angle views, depth perception, and stereoscopic imagery. Light field data is typically represented by a 4D dataset (position and direction) [60].

Key technologies in light field 3D display include light field data capture, light field cameras, image reconstruction, and display technology. Light field data is captured using microlens arrays or multi-view camera arrays, which record both the spatial position and direction of each light ray. Devices like microlens-based cameras (e.g., Lytro) are used to capture multi-view light ray information through optical components. The captured data is then processed through computer algorithms to reconstruct 3D images, allowing for viewpoint adjustments and depth of field changes. For display, light field technology enables glasses-free 3D viewing and supports holographic projections as well as VR/AR devices for immersive experiences [61, 62].
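
The viewpoint adjustment and depth-of-field change mentioned above can be sketched on a toy 4D light field. The example assumes the common two-plane indexing `lf[u][v][s][t]` (two angular and two spatial indices) and uses the textbook shift-and-add refocusing scheme; it is not the method of any cited system, all function names are hypothetical, and the data is a synthetic single bright point whose image position shifts with the view.

```python
LightField = list  # nested lists indexed as lf[u][v][s][t]


def point_light_field(n_ang: int, n_pos: int, center: int, disparity: int) -> LightField:
    """Synthetic light field of one bright point that shifts between views."""
    c = (n_ang - 1) / 2
    lf = [[[[0.0] * n_pos for _ in range(n_pos)] for _ in range(n_ang)]
          for _ in range(n_ang)]
    for u in range(n_ang):
        for v in range(n_ang):
            s = center + round(disparity * (u - c))
            t = center + round(disparity * (v - c))
            if 0 <= s < n_pos and 0 <= t < n_pos:
                lf[u][v][s][t] = 1.0
    return lf


def sub_aperture_view(lf: LightField, u: int, v: int) -> list:
    """2D image seen from one viewing direction (viewpoint adjustment)."""
    return [row[:] for row in lf[u][v]]


def refocus(lf: LightField, shift: float) -> list:
    """Shift-and-add refocus: align views for one depth, then average them."""
    n_u, n_v = len(lf), len(lf[0])
    n_s, n_t = len(lf[0][0]), len(lf[0][0][0])
    cu, cv = (n_u - 1) / 2, (n_v - 1) / 2
    out = [[0.0] * n_t for _ in range(n_s)]
    for u in range(n_u):
        for v in range(n_v):
            ds, dt = round(shift * (u - cu)), round(shift * (v - cv))
            for s in range(n_s):
                for t in range(n_t):
                    if 0 <= s + ds < n_s and 0 <= t + dt < n_t:
                        out[s][t] += lf[u][v][s + ds][t + dt]
    return [[value / (n_u * n_v) for value in row] for row in out]


if __name__ == "__main__":
    lf = point_light_field(n_ang=3, n_pos=7, center=3, disparity=1)
    print("in focus :", refocus(lf, 1)[3][3])
    print("defocused:", refocus(lf, 0)[3][3])
```

When the shift matches the point's disparity, all views add up at the same pixel and the point appears sharp; with a mismatched shift its energy is spread over neighbouring pixels, which is the depth-of-field effect.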

The advantages of light field 3D display technology include high realism, glasses-free 3D effects, dynamic viewpoint control, and a wide range of applications. It provides rich 3D information and supports dynamic viewpoint adjustments, enhancing the overall realism of the experience. Additionally, it enables stereoscopic vision without the need for special equipment [63]. The technology also allows users to freely change viewpoints within a scene, making it ideal for virtual reality (VR) and augmented reality (AR) applications. Furthermore, light field 3D display is widely used in fields such as medical display, film production, advertising, and VR/AR.

In 2020, Hayato Watanabe et al. systematically reviewed full-parallax light-field 3D display technologies, focusing on three core methods: integral 3D display, Aktina Vision, and compressive light field display [64]. The integral 3D display features a simple structure and is suitable for thin devices; Aktina Vision achieves high pixel density and full parallax through multi-projection and a customized diffusing screen; the compressive light field display approximates light field reconstruction with relatively high pixel density via layered image stacking, though it faces challenges such as approximation errors and system complexity. The study highlights that high-quality light field displays still require massive image information, and future improvements may involve multi-device collaboration and time-division multiplexing, with promising applications in exhibitions, education, entertainment, and other fields.

In 2025, Cheng-Bo Zhao et al. optimized the beam divergence angle of a 2D display to ±2.7° using a compound microlens array, achieving high-precision beam directionality. Coupled with a flat-panel aspheric lenticular lens array for beam modulation, the system realizes a 100° wide viewing angle, crosstalk-free, full-color dynamic (30 Hz) light field 3D display as shown in Figure 16 [65]. With its compact structure and enhanced resolution, the system is suitable for multi-user immersive 3D visual applications in education, medical imaging, exhibitions, and beyond.

Looking Glass is unique in its ability to present group-viewable 3D media – without the need for headsets or special glasses as shown in Figure 17. Traditional glasses-wearing 3D systems typically only accommodate a single viewer and rely on uncomfortable or cumbersome accessories like headsets or require precise head tracking, which can lead to discomfort over long periods of use. Looking Glass displays are designed to offer a highly comfortable and accessible experience, allowing multiple viewers to simply look at a display to experience real 3D content at the same time [66].

|

Figure 17 A Light Field 3D image is displayed on a state-of-the-art 3D screen manufactured by Looking Glass Factory. Multiple observation angles become visible depending on the viewer’s real-world viewing angle [67]. |

The “Looking Glass” display presents multiple images from various angles, giving viewers the impression that they are observing the same object from different angles. It generates between 45 and 100 distinct images. Its operating principle involves the use of a specialized glass screen that forms a polarized layer. At the same time, software developed by FXG Company enables the display to output multiple images simultaneously, allowing viewers to experience video content from various viewpoints, thereby achieving an effect similar to glasses-free holography. Furthermore, this device provides a highly immersive interactive experience. By connecting an infrared sensor and positioning their hands above it, users can interact with the content on the holographic screen without the need for precise targeting, as is required by some other devices. However, it is important to note that this display system is relatively expensive and faces challenges when displaying text, as it must use large characters due to its lower resolution. Additionally, extended viewing of three-dimensional screens may cause visual fatigue [66, 67].

4 Future trends and challenges

Based on the above technical analysis, this study summarizes the development trends and challenges confronting immersive glasses-free stereoscopic display technologies, as detailed in Figure 18.

|

Figure 18 Future trends and challenges. |

(1) Core technological breakthroughs

Advanced stereoscopic display technologies are supported by critical breakthroughs in three foundational domains: advances in optical technologies and laser equipment that deliver brighter and higher-fidelity imagery [8, 68, 69]; research and development of novel display materials, such as metasurfaces, quantum dots, and advanced LEDs, that enhance the resolution, color range, and durability of next-generation systems [70, 71]; and sophisticated algorithmic optimization techniques, including AI-driven rendering and compression, which are essential for improving realism and computational efficiency [72].

(2) System integration and intelligence enhancement

System integration and intelligence enhancement are propelling stereoscopic displays beyond mere visual spectacle by leveraging core enabling technologies. This involves utilizing AI for real-time, context-aware content optimization [36, 73]; incorporating interactive systems such as gesture recognition and AR to foster active audience participation [74–76]; and converging with audio, haptic, and other sensory technologies to create a comprehensive, immersive multi-sensory experience [5].

(3) Engineering and product realization

Engineering and product realization of stereoscopic display technology focuses on transforming core technologies into viable commercial products. It encompasses three key thrusts: miniaturized components that enhance portability and versatility for diverse scenarios while reducing setup time and costs [7]; robust, sustainable materials ensuring long-term reliability in public spaces with minimal maintenance requirements [7]; and cost optimization through large-scale commercialization to accelerate the adoption across advertising, retail, and events [9].

(4) Market and application expansion

The market deployment of stereoscopic display technology follows a clear and defined evolutionary path. First, attempts should be made to achieve large-scale application and popularization in professional fields such as medical imaging and industrial design, along with the consumer electronics market. Second, through the integration with AI and Digital Twins, the application scenarios expand into emerging domains such as the industrial metaverse and smart city infrastructure, enabling cross-industry penetration. Third, the progression drives the maturation of the entire industry chain, by establishing unified technical standards, refining content creation tools, and fostering a vibrant developer ecosystem. Ultimately, a sustainable and thriving application environment will be built for the long-term technological development.

5 Conclusions

Novel immersive glasses-free stereoscopic display technologies transcend the physical boundaries of traditional exhibition formats, reshaping the interactive relationships between exhibits and audiences, and facilitating a transition from “observation” to “immersion”. Exhibitions and artistic expressions have evolved from fixed spatial configurations and static displays toward the convergence of fluid space design and dynamic visuals, creating a super-subjective field of experience. The multidimensional relationships among audiences, exhibits, and settings are technologically reconfigured, forming a visual ecosystem marked by spatiotemporal variability, real-time interactivity, and irreproducibility, thereby constructing an open, participatory platform for value creation in the future exhibition industry.

This paper systematically investigates the technical implementation principles and specific application scenarios of novel immersive glasses-free stereoscopic display technologies in the modern exhibition industry. Through an analysis of holographic 3D display, optical illusion display, projection stereoscopic display, floating 3D display, and light-field 3D display, a comprehensive comparison of their technical characteristics and applicability is conducted. Current application limitations and future development trends are discussed. The findings indicate that immersive glasses-free stereoscopic display technologies can reconstruct 3D environments with realistic motion parallax and depth cues in venues such as exhibition centers and museums, thereby enabling multi-user interactive experiences without auxiliary devices. These technologies not only provide crucial support for innovation in exhibition product forms and the optimization of service content, but also serve as a key technological driving force for the informatization, digitalization, and intelligent transformation of the exhibition industry. Their application can promote the systematic reconstruction of the industry's organizational structure and business processes, while also laying a solid technical foundation for the sustainable development and large-scale implementation of immersive experiences within modern exhibition systems.

Funding

This research was funded by the Fujian Provincial Innovation Strategy Research Plan Project (Grant No. 2025R0102) entitled “Immersive cultural tourism Experience Scenarios and Industrial Integration Pathways in Fujian Province”, and by the Fujian Provincial Social Science Planning Project (Grant No. FJ2023MGCA024, Key Program) entitled “Dynamic mechanism and path of transformation and upgrading for Fujian convention and exhibition industry in the trend of immersive experience”.

Conflicts of interest

The authors declare that there are no conflicts of interest.

Data availability statement

Data underlying the results presented in this paper are not publicly available at this time but may be obtained from the authors upon reasonable request.

Author contribution statement

Conceptualization, Xiao Liu and Hong-Yi Lin; methodology, Xiao Liu; validation, Run-Xuan Zhai and Hong-Yi Lin; formal analysis, Xiao Liu and Zi-Chen Zhang; investigation, Run-Xuan Zhai and Hong-Yi Lin; resources, Run-Xuan Zhai; Liang-Qin Gan and Xiao Liu; writing – original draft preparation, Xiao Liu and Hong-Yi Lin; writing – review and editing, Xiao Liu and Hong-Yi Lin; visualization, Liang-Qin Gan and Hong-Yi Lin; supervision, Liang-Qin Gan and Xiao Liu; project administration, Xiao Liu; funding acquisition, Xiao Liu. All authors have read and agreed to the published version of the manuscript.

References

- Huang ZF, Zhang Z, Li T, et al., Digital empowerment for the deep integration of culture and tourism: Theoretical logic and research framework, Tour. Sci. 38, 1–16 (2024). https://lykx.sitsh.edu.cn/CN/Y2024/V38/I1/1.

- Hua J, Chen QH, Immersive experience: A new business form of culture and technology, J. Shanghai Univ. Finance Econ. 21, 18–32 (2019). https://doi.org/10.16538/j.cnki.jsufe.2019.05.002.

- Karnchanapayap G, Activities-based virtual reality experience for better audience engagement, Comput. Hum. Behav. 146, 107796 (2023). https://doi.org/10.1016/j.chb.2023.107796.

- Park J, Kang H, Huh C, Lee MJ, Do immersive displays influence exhibition attendees’ satisfaction?: A stimulus-organism-response approach, Sustainability 14, 6344 (2022). https://doi.org/10.3390/su14106344.

- Yao J, Wang L, Pang Q, Fang M, Coupling coordination and spatial analyses of the MICE and tourism industries: Do they fit well? Curr. Issues Tour. 27, 2783–2796 (2024). https://doi.org/10.1080/13683500.2023.2240473.

- Trunfio M, Jung T, Campana S, Mixed reality experiences in museums: Exploring the impact of functional elements of the devices on visitors’ immersive experiences and post-experience behaviours, Inf. Manag. 59, 103698 (2022). https://doi.org/10.1016/j.im.2022.103698.

- Liu X, Wen ZW, Song S, et al., Speckle-reduced green and yellow-green Nd:YVO4(YAG)/PPMgLN lasers for cinema exhibition industry, Optik 243, 167427 (2021). https://doi.org/10.1016/j.ijleo.2021.167427.

- Liu X, Zeng XT, Shi WJ, et al., Application of a novel Nd:YAG/PPMgLN laser module speckle-suppressed by multi-mode fibers in an exhibition environment, Photonics 9, 46 (2022). https://doi.org/10.3390/photonics9010046.

- Houston J, Beck W, Design considerations for cinema exhibition using RGB laser illumination, SMPTE Motion Imaging J. 124, 26–41 (2015). https://doi.org/10.5594/j18547.

- Bruno F, Bruno S, De Sensi G, et al., From 3D reconstruction to virtual reality: A complete methodology for digital archaeological exhibition, J. Cult. Herit. 11, 42–49 (2010). https://doi.org/10.1016/j.culher.2009.02.006.

- Pratisto EH, Thompson N, Potdar V, Immersive technologies for tourism: A systematic review, Inf. Technol. Tour. 24, 181–219 (2022). https://doi.org/10.1007/s40558-022-00228-7.

- Collin-Lachaud I, Passebois J, Do immersive technologies add value to the museum-going experience? An exploratory study conducted at France’s Paleosite, Int. J. Arts Manag. 11, 60–71 (2018). https://www.jstor.org/stable/41064975.

- Ku ECS, Embracing the future of interactive marketing with contactless technology: Evidence from tourism businesses, J. Res. Interact. Mark. 19, 1 (2024). https://doi.org/10.1108/JRIM-04-2024-0183.

- The Editors of Encyclopaedia Britannica, Interactive Media, (2025). https://www.britannica.com/technology/interactive-media (accessed 2 February 2025).

- Zimeng G, Wei Y, Qiuxia C, Xiaoting H, The contribution and interactive relationship of tourism industry development and technological innovation to the informatization level: Based on the context of low-carbon development, Front. Environ. Sci. 11, 999675 (2023). https://doi.org/10.3389/fenvs.2023.999675.

- Hua J, Zhou F, Xia Z, et al., Large-scale metagrating complex-based light field 3D display with space-variant resolution for non-uniform distribution of information and energy, Nanophotonics 12, 285–295 (2023). https://doi.org/10.1515/nanoph-2022-0637.

- Khuderchuluun A, Erdenebat MU, Dashdavaa E, et al., Comprehensive optimization for full-color holographic stereogram printing system based on single-shot depth estimation and time-controlled exposure, Opt. Laser Technol. 181, 111966 (2025). https://doi.org/10.1016/j.optlastec.2024.111966.

- Wang T, Yang C, Chen J, et al., Naked-eye light field display technology based on mini/micro light emitting diode panels: A systematic review and meta-analysis, Sci. Rep. 14, 24381 (2024). https://doi.org/10.1038/s41598-024-75172-z.

- Liu S, Li Y, Su Y, Recent progress in true 3D display technologies based on liquid crystal devices, Crystals 13, 1639 (2023). https://doi.org/10.3390/cryst13121639.

- Gao HY, Yao QX, Liu P, et al., Latest development of display technologies, Chin. Phys. B 25, 094203 (2016). https://doi.org/10.1088/1674-1056/25/9/094203.

- Hoffman DM, Girshick AR, Akeley K, Banks MS, Vergence–accommodation conflicts hinder visual performance and cause visual fatigue, J. Vision 8, 33 (2008). https://doi.org/10.1167/8.3.33.

- Fattal D, Peng Z, Tran T, et al., A multi-directional backlight for a wide-angle, glasses-free three-dimensional display, Nature 495, 348–351 (2013). https://doi.org/10.1038/nature11972.

- Blackwell C, Can C, Khan J, et al., Volumetric 3D display in real space using a diffractive lens, fast projector, and polychromatic light source, Opt. Lett. 44, 4901–4904 (2019). https://doi.org/10.1364/OL.44.004901.

- Trolinger JD, The language of holography, Light: Adv. Manuf. 2, 34 (2021). https://doi.org/10.37188/lam.2021.034.

- Liu X, Yang HX, Lin HY, et al., Application of laser holographic technology in modern exhibitions and shows, Laser Infrared 52, 787–795 (2022). https://doi.org/10.3969/j.issn.1001-5078.2022.06.001.

- An J, Won K, Kim Y, et al., Slim-panel holographic video display, Nat. Commun. 11, 5568 (2020). https://doi.org/10.1038/s41467-020-19298-4.

- Zhou P, Li Y, Chen CP, et al., 30.4: Multi-plane holographic display with a uniform 3D Gerchberg-Saxton algorithm, SID Symp. Dig. Tech. Pap. 46, 442–445 (2015). https://doi.org/10.1002/sdtp.10411.

- Shi L, Li B, Kim C, et al., Towards real-time photorealistic 3D holography with deep neural networks, Nature 591, 234–239 (2021). https://doi.org/10.1038/s41586-020-03152-0.

- Li J, Smithwick Q, Chu D, Holobricks: Modular coarse integral holographic displays, Light Sci. Appl. 11, 57 (2022). https://doi.org/10.1038/s41377-022-00742-7.

- Panteliadou P, Optical illusions in architecture: Towards a novel classification of architectural works, Int. J. Image 2, 127–142 (2012). https://doi.org/10.18848/2154-8560/CGP/v02i02/44037.

- Art & Architecture. Millennium Park Foundation (2026). Retrieved January 15, 2026, from www.millenniumparkfoundation.org/art-architecture.

- Cheng JH, Lin HH, Design and development of interactive LED display for fan applications, in: Proceedings of the 2018 IEEE International Conference on Applied System Invention (ICASI), Chiba, Japan, 13–17 April 2018 (IEEE, New York, 2018), pp. 393–396. https://doi.org/10.1109/ICASI.2018.8394265.

- Ortega MXP, Ramírez JCD, Castro JWV, et al., Application of the technical-pedagogical resource 3D holographic LED-fan display in the classroom, Smart Learn. Environ. 7, 32 (2020). https://doi.org/10.1186/s40561-020-00136-5.

- Cultural tourism projects. Hunan Ruyi Culture Technology Co., Ltd. (2026). Retrieved January 15, 2026, from https://www.ruyuan3d.com/en/cultural-tourism-projects.htm.

- Jia-yuan L, Nian-yu Z, Jing L, et al., Trend of action on the display effect based on Pepper’s ghost images affected by illumination and color temperature from LED light sources, Chin. Opt. 16, 339–347 (2023). https://doi.org/10.37188/co.2022-0027.

- Trajkova M, Desphande M, Knowlton A, Monden C, Long D, Magerko B, AI meets holographic Pepper’s ghost: A co-creative public dance experience, in: DIS ’23 Companion: Companion Publication of the 2023 ACM Designing Interactive Systems Conference (2023), pp. 274–278. https://doi.org/10.1145/3563703.35966.

- Trajkova M, Desphande M, Knowlton A, et al., AI meets holographic Pepper’s ghost: A co-creative public dance experience, in: Proceedings of DIS Companion ’23, Pittsburgh, PA, USA, 10–14 July 2023 (ACM, New York, 2023), 274–278. https://doi.org/10.1145/3563703.3596658.

- Chlubna T, Milet T, Zemčík P, Out-of-focus artifacts mitigation and autofocus methods for 3D displays, Vis. Inform. 9, 31–42 (2025). https://doi.org/10.1016/j.visinf.2024.12.001.

- Watanabe H, Okaichi N, Omura T, et al., Aktina Vision: Full-parallax three-dimensional display with 100 million light rays, Sci. Rep. 9, 17688 (2019). https://doi.org/10.1038/s41598-019-54243-6.

- Suh YW, Oh J, Kim HM, et al., Three-dimensional display-induced transient myopia and the difference in myopic shift between crossed and uncrossed disparities, Invest. Ophthalmol. Vis. Sci. 53, 5029–5032 (2012). https://doi.org/10.1167/iovs.12-9588.

- Naked eye 3D series. Ningbo Weizhen (2026). Retrieved January 15, 2026, from http://www.wz-xs.com/en/Content/625367.html.

- Liu X, Li H, The progress of light-field 3-D displays, Inf. Disp. 30, 6–14 (2014). https://doi.org/10.1002/j.2637-496x.2014.tb00760.x.

- Jian L, Qiudong Z, Si C, et al., New type of glasses-free suspending stereoscopic display system, Opt. Tech. 39, 323–327 (2013). https://doi.org/10.3788/gxjs20133904.0323.

- Bogaert L, Meuret Y, De Smet H, Thienpont H, Analysis of two novel concepts for multiview three-dimensional displays using one projector, Opt. Eng. 49, 127401 (2010). https://doi.org/10.1117/1.3524240.

- Hwang J, Shin J, Lee D, et al., A living lab approach for concurrent 3D documentation of an architectural renovation project – a showcase of Gunsan Civic Cultural Center, Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. XLVIII-2/W4, 227–232 (2024). https://doi.org/10.5194/isprs-archives-xlviii-2-w4-2024-227-2024.

- Sohn Y, Park Y, Lin L, Jung M, ‘Eternal Recurrence’: Development of a 3D water curtain system and real-time projection mapping for a large-scale systems artwork installation, Digit. Creat. 31, 133–142 (2020). https://doi.org/10.1080/14626268.2020.1762664.

- Nar D, Kotecha R, Optimal waypoint assignment for designing drone light show formations, Results Control Optim. 9, 100174 (2022). https://doi.org/10.1016/j.rico.2022.100174.

- Okuda S, Hashimoto N, Projection mapping using a rotating volumetric 3D display, Proc. SPIE 11766, 117661O (2021). https://doi.org/10.1117/12.2591029.